Full control over how your AI behaves.

Fine-tuned LLMs on your data, your rules, your hardware.

Delivered within 72 hours.

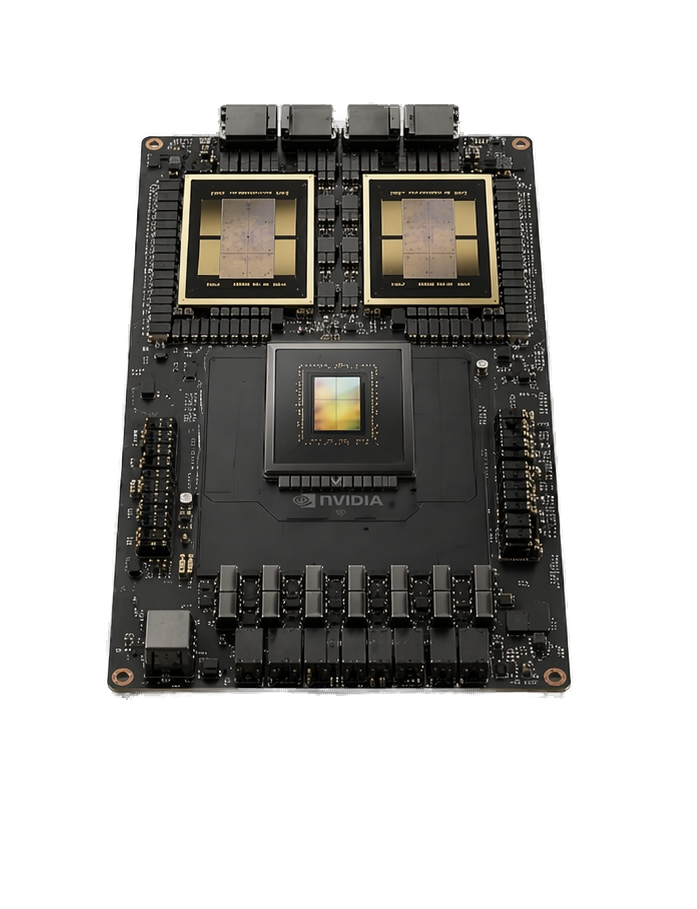

Trained and served on Grace Blackwell, air-gapped from the internet.

Rented intelligence, or owned behavior.

Cloud LLMs are rented. Every call depends on a vendor's pricing, policy, and release schedule you don't control. A fine-tuned model is yours — you dictate the behavior.

What it knows

Trained on your documents, contracts, ticket history, and internal policies.

How it speaks

Your terminology, your format, your refusal phrasing, your brand voice.

Where it runs

On your hardware, our hardware, your cloud — anywhere you choose.

Who sees the data

Zero third-party calls, zero training-data exfiltration, zero vendor logging.

What it won't do

Your content policy, your compliance rules, your brand limits — not ours.

What it costs

One-time fine-tune plus marginal inference cost. Not per-token forever.

Open-weight foundation models, NVIDIA silicon, standard deployment targets.

Five models, shipped and reproducible.

Every model below is live on Hugging Face, trained end-to-end on our own hardware, and documented with real benchmarks. Four LLM fine-tunes and one multimodal brain-engagement encoder. No vaporware, no roadmap slides.

Legal Contract Q&A

Due-diligence, clause extraction, and risk review across NDAs and MSAs.

Customer-Service Agent

Support-ticket triage and on-brand response drafting at scale.

Text-to-SQL Translator

Natural-language database queries across unseen schemas.

Legal Contract Q&A — Premium

The same task with more capacity — deeper reasoning on unusual clauses.

Brain Engagement Encoder

fMRI BOLD-signal prediction from video + audio — for ad, film, and UX testing.

From contract to production in 72 hours.

Every engagement follows the same pipeline. Every step is automated, every output auditable, every measurement reproducible. Nothing proprietary — you could run this pipeline yourself if you wanted. We just do it faster.

Data intake

JSONL, CSV, PDF, chat transcripts, Slack / Zendesk / Intercom exports. We handle the ingest.

Schema + PII redaction

Normalized to chat schema, PII redacted automatically before anything touches training.

Quality filter + dedup

MinHash deduplication, quality threshold enforcement, bad-row rejection with audit log.

Optional domain enrichment

Local on-prem models expand your corpus. Zero data to third parties. Opt in per engagement.

QLoRA training

On Grace Blackwell silicon, packed sequences, state-of-the-art training recipe.

DPO safety alignment

Refusal behavior and brand voice baked into the LoRA weights — not just a filter on top.

Automated benchmark

Academic, domain, performance, cost, and safety — all 6 sections run and reported.

Red-team verification

50-prompt attack suite. 70%+ block rate required before any adapter ships.

NVFP4 + EAGLE-3 deploy

State-of-the-art inference stack — every acceleration NVIDIA ships, running together.

Hugging Face push

Private or public repo. You own the weights. You can export, re-host, or modify forever.

Handoff

Endpoint keys delivered if Pylox-hosted — or adapter file shipped if you're running self-host.

The stack your model runs on.

Four pieces of NVIDIA's 2026 inference path, running together in one vLLM build.

Grace Blackwell

128 GB unified memory across CPU and GPU. Tensor cores run 4-bit math natively, with no conversion cost.

NVFP4 weights

4-bit floating point. Weights occupy a quarter of the memory a 16-bit checkpoint would, and the tensor cores process them directly.

EAGLE-3 speculative decoding

NVIDIA and RedHatAI draft heads. The model predicts several tokens ahead and verifies them in one forward pass.

FlashInfer kernels

Attention and GEMM kernels tuned for Blackwell. They ship together with NVFP4 in the same vLLM build.

Real throughput depends on prompt length, batch size, and traffic shape. We measure it on your workload and include the number in your engagement proposal.

Throughput you can put in an SLA.

Every figure is measured on our own Grace Blackwell hardware — the same machine your fine-tune trains and serves on. NVFP4 quantization paired with EAGLE-3 speculative decoding pushes every adapter far past its baseline.

Throughput depends on the GPU and its clock configuration — low end is entry Blackwell (GB10-class / L40S), high end is B200-class. The "measured" column is single-user, short-prompt throughput on our own Grace Blackwell. NVFP4 weights paired with EAGLE-3 speculative decoding is the fastest generally-available dense-model inference stack as of April 2026. Your real number depends on your hardware, prompt length, batch size, and traffic shape — we measure it during the consultation and put it in the engagement proposal.

Once a workload hits steady, high-volume traffic, self-hosted inference on owned hardware runs far below metered API pricing. Your break-even point depends on your traffic shape — quiet, bursty workloads stay closer to parity; sustained heavy traffic is where the gap widens.

- Fixed hardware cost — volume can grow without linear cost growth.

- No per-token metering, no rate-limit surge pricing.

- Data never leaves your network — before the first request is billed.

Your model beats your current baseline, or your money back.

We agree on the evaluation set and the minimum delta before work begins. If the shipped adapter doesn't clear the bar on the agreed benchmark, the engagement fee is refunded in full. No hedging, no footnotes, no clawback period.

Pick the silicon. Keep the weights.

All tiers ship with DPO safety alignment, a runtime safety gateway, and a red-team verification report. You own the adapter weights outright — no lock-in, no revenue share, no license recall.

- Any base model under 70B — Llama, Qwen, Gemma, Mistral, or your choice

- Any data, any use case, any format

- Self-host or Pylox-hosted

- Full pipeline — train, benchmark, safety, deploy

- Perfect for experiments and exploratory fine-tunes

- Self-host or Pylox-hosted

- NVFP4 + EAGLE-3 inference stack

- DPO safety alignment baked in

- Runtime safety gateway included

- Refresh on-demand

- Self-host or Pylox-hosted

- NVFP4 + EAGLE-3 inference stack

- DPO safety alignment included

- Full domain benchmark harness + report

- Red-team verification report

- Self-host or Pylox-hosted

- NVFP4 + EAGLE-3 inference stack

- DPO safety alignment included

- Extended red-team + S2 safety audit

- Dedicated account engineer

All tiers · data never leaves your hardware · adapters you own

Your silicon, your server room, your data.

For clients who need true on-prem — we bring the hardware, install it in your server room, train your model, and walk out. All inference runs on your box, behind your firewall, forever.

DGX Spark installed on-site

Grace Blackwell GB10 with 128 GB unified memory. Runs up to 70B fine-tunes with NVFP4 + EAGLE-3 acceleration. Sits in your server room forever — not rented, not subscription-locked.

Air-gapped training handoff

Encrypted drive pickup from your site. Training on our Grace Blackwell — never touches the internet. Drive and fine-tune returned in person with chain-of-custody documentation and wipe certificate.

Nationwide coverage

South Florida (Miami-Dade, Broward, Palm Beach): no travel fee, one-hour on-site emergency response.

Anywhere else in the USA: installation included, travel billed at cost.

Law firms. Hospitals. Hedge funds. Family offices. Wealth managers. The "this can never touch OpenAI" crowd.

Book a Sovereign Edge consultationDefense in depth, documented per model.

Safety isn't a toggle — it's a stack. Every engagement ships with all three layers configured, tested, and reported in writing.

Training-time DPO alignment

Refusal behavior baked into the LoRA weights during fine-tune. The model is taught what to decline before it ever sees production traffic.

Runtime safety gateway

Meta Prompt Guard 2 (GPU-pinned) plus Llama Guard 3 sit in front of every inference. Prompt-injection, jailbreaks, and category violations are blocked before your adapter is called.

Red-team verification

A 50-prompt attack suite runs against every shipped model. We require a ≥70% block rate. The full report is handed to you with the adapter.

SLA-backed operators, not a support queue.

Every ticket routes through the team that built your model. No offshored call center. No LLM chatbot triage. No tier-one handoff.

- Sev-1Next business day

- Sev-23 business days

- ChannelEmail only

- Coverage9am–6pm ET · Mon–Fri

- Sev-1Within 4 business hours

- Sev-21 business day

- ChannelEmail + Slack Connect

- Coverage8am–8pm ET · 7-day sev-1

- Sev-11 hour · 24 / 7 / 365

- Sev-24 hours

- ChannelSlack + direct phone line

- CoverageOn-call rotation · named architect

Questions buyers always ask.

Ready to forge your private model?

Send the dataset you want to fine-tune on, the compliance constraint you're trying to solve, or the compute budget you've already approved. You'll get a scoped response the same day.